Yes, the EU's New #CopyrightDirective is All About Filters. By Cory Doctorow

30th November 2018

See CC article from eff.org

When the EU started planning its new Copyright Directive (the "Copyright in the Digital Single Market Directive"), a group of powerful entertainment industry lobbyists pushed a terrible idea: a mandate that all online platforms would have to create crowdsourced databases of "copyrighted materials" and then block users from posting anything that matched the contents of those databases.

At the time, we, along with academics and technologists, explained why this would undermine the Internet, even as it would prove unworkable. The filters would be incredibly expensive to create, would erroneously block whole libraries' worth of legitimate materials, would allow even more libraries' worth of infringing materials to slip through, and would not be capable of sorting out "fair dealing" uses of copyrighted works from infringing ones.

The Commission nonetheless included it in their original draft. In the two years that followed, the European Parliament went back and forth on whether to keep the loosely described filters, with German MEP Axel Voss finally squeezing out a narrow victory in his own committee and then in an emergency vote of the whole Parliament. Now, after a lot of politicking and lobbying, Article 13 is potentially only a few weeks away from officially becoming an EU directive, controlling the Internet access of more than 500,000,000 Europeans.

The proponents of Article 13 have a problem, though: filters don't work, they cost a lot, they underblock, they overblock, and they are ripe for abuse (basically, all the objections the Commission's experts raised the first time around). So to keep Article 13 alive, they've spun, distorted and obfuscated its intention, and now they can be found in the halls of power, proclaiming to the politicians who'll get the final vote that "Article 13 does not mean copyright filters."

But it does. Here's a list of Frequently Obfuscated Questions and our answers. We think that after you've read them, you'll agree: Article 13 is about filters, can only be about filters, and will result in filters.

Article 13 is about filtering, not "just" liability

Today, most of the world (including the EU) handles copyright infringement with some sort of takedown process. If you provide the public with a place to publish their thoughts, photos, videos, songs, code, and other copyrightable works, you don't have to review everything they post (for example, no lawyer has to watch 300 hours of video every minute at YouTube before it goes live).

Instead, you allow rightsholders to notify you when they believe their copyrights have been violated, and then you are expected to speedily remove the infringement. If you don't, you might still not be liable for your users' infringement, but you lose access to the quick and easy 'safe harbor' provided by law in the event that you are named as part of any copyright lawsuit (and since the average Internet company has a lot more money than the average Internet user, chances are you will be named in that suit).

What you're not expected to be is the copyright police. In fact, the EU has a specific Europe-wide law that stops member states from forcing Internet services to play this role: the same rule that defines the limits of their liability, the E-Commerce Directive, in the very next article, prohibits a "general obligation to monitor." That's to stop countries from saying "you should know that your users are going to break some law, some time, so you should actively be checking on them all the time, and if you don't, you're an accomplice to their crimes."

The original version of Article 13 tried to break this deal by rewriting that second part. Instead of a prohibition on monitoring, it required monitoring, in the form of a mandatory filter. When the European Parliament rebelled against that language, it was because millions of Europeans had warned them of the dangers of copyright filters. To bypass this outrage, Axel Voss proposed an amendment to the Article that removed the explicit mention of filters but rewrote the other part of the E-Commerce Directive instead. By claiming this "removed the filters", he got his amendment passed, including by gaining votes from MEPs who thought they were striking down Article 13.

Voss's rewrite says that sharing sites are liable for infringing content unless they take steps to stop that content before it goes online. So yes, this is about liability, but it's also about filtering. What happens if you strip liability protections from the Internet? It means that services are now legally responsible for everything on their site.

Consider a photo-sharing site where millions of photos are posted every hour. There are not enough lawyers (let alone copyright lawyers, let alone copyright lawyers who specialise in photography) alive today to review all those photos before they are permitted to appear online. Add to that all the specialists who'd have to review every tweet, every video, every Facebook post, every blog post, every game mod and livestream. It takes a fraction of a second to take a photograph, but it might take hours or even days to ensure that everything the photo captures is either in the public domain, properly licensed, or fair dealing. Every photo represents as little as an instant's work, but making it comply with Article 13 represents as much as several weeks' work. There is no way that Article 13's purpose can be satisfied with human labour. It's strictly true that Axel Voss's version of Article 13 doesn't mandate filters, but it does create a liability system that can only be satisfied with filters.

But there's more: Voss's stripping of liability protections has Big Tech firms like YouTube scared, because if the filters aren't perfect, they will be potentially liable for any infringement that gets past them, and given their billions, that means anyone and everyone might want to get a piece of them. So now, YouTube has started lobbying for the original text, copyright filters and all. That text is still on the table, because the trilogue uses both Voss's text (liability to get filters) and the member states' proposal (all filters, all the time) as the basis for the negotiation.

Most online platforms cannot have lawyers review all the content they make available

The only online services that can have lawyers review their content are services for delivering relatively small libraries of entertainment content, not the general-purpose speech platforms that make the Internet unique. The Internet isn't primarily used for entertainment (though if you're in the entertainment industry, it might seem that way): it is a digital nervous system that stitches together the whole world of 21st-century human endeavor. As the UK Champion for Digital Inclusion discovered when she commissioned a study of the impact of Internet access on personal life, people use the Internet to do everything, and people with Internet access experience positive changes across their lives: in education, political and civic engagement, health, connections with family, employment, etc.

The job we ask, say, iTunes and Netflix to do is a much smaller job than the one we ask the online platforms to do. Users of online platforms do sometimes post and seek out entertainment experiences on them, but as a subset of doing everything else: falling in love, getting and keeping a job, attaining an education, treating chronic illnesses, staying in touch with their families, and more. iTunes and Netflix can pay lawyers to check all the entertainment products they make available because that's a fraction of a slice of a crumb of all the material that passes through the online platforms. That system would collapse the instant you tried to scale it up to manage all the things that the world's Internet users say to each other in public.

It's impractical for users to indemnify the platforms

Some Article 13 proponents say that online companies could substitute click-through agreements for filters, getting users to pay them back for any damages the platform has to pay out in lawsuits. They're wrong. Here's why.

Imagine that every time you sent a tweet, you had to click a box that said, "I promise that this doesn't infringe copyright and I will pay Twitter back if they get sued for this." First of all, this assumes a legal regime that lets ordinary Internet users take on serious liability in a click-through agreement, which would be very dangerous given that people do not have enough hours in the day to read all of the supposed 'agreements' we are subjected to by our technology.

Some of us might take these agreements seriously and double- and triple-check everything we posted to Twitter, but millions more wouldn't, and they would generate billions of tweets, and every one of those tweets would represent a potential lawsuit. For Twitter to survive those lawsuits, it would have to ensure that it knew the true identity of every Twitter user (and how to reach that person) so that it could recover the copyright damages they'd agreed to pay. Twitter would then have to sue those users to get its money back. Assuming that the user had enough money to pay for Twitter's legal fees and the damages it had already paid, Twitter might be made whole... eventually. But for this to work, Twitter would have to hire every contract lawyer alive today to chase its users and collect from them. This is no more sustainable than hiring every copyright lawyer alive today to check every tweet before it is published.

Small tech companies would be harmed even more than large ones

It's true that the Directive exempts "Microenterprises and small-sized enterprises" from Article 13, but that doesn't mean that they're safe. The instant a company crosses the threshold from "small" to "not-small" (which is still a lot smaller than Google or Facebook), it has to implement Article 13's filters. That's a multi-hundred-million-dollar tax on growth, all but ensuring that the small Made-in-the-EU competitors to American Big Tech firms will never grow to challenge them. Plus, those exceptions are controversial in the Trilogue, and may disappear after yet more rightsholder lobbying.

Existing filter technologies are a disaster for speech and innovation

ContentID is YouTube's proprietary copyright filter. It works by allowing a small, trusted cadre of rightsholders to claim works as their own copyright, and limits users' ability to post those works according to the rightsholders' wishes, which are more restrictive than what the law's user protections would allow. ContentID then compares the soundtrack (but not the video component) of any user upload against the database to see whether it is a match.

Everyone hates ContentID. Universal and the other big rightsholders complain loudly and frequently that ContentID is too easy for infringers to bypass. YouTube users point out that ContentID blocks all kinds of legitimate material, including silence, birdsong, and music uploaded by the actual artist for distribution on YouTube. In many cases, this isn't a 'mistake,' in the sense that Google has agreed to let the big rightsholders block or monetize videos that do not infringe any copyright, but instead make a fair use of copyrighted material.

ContentID does a small job, poorly: filtering the soundtracks of videos to check for matches with a database populated by a small, trusted group. No one (who understands technology) seriously believes that it will scale up to blocking everything that anyone claims as a copyrighted work (without having to show any proof of that claim or even identify themselves!), including videos, stills, text, and more.
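As a rough illustration of how matching uploads against a database of claims can flag silence, here is a deliberately crude toy in Python. It is a hypothetical sketch, not ContentID's actual algorithm (which is proprietary and far more sophisticated): the one-bit "loudness rises" fingerprint, the `frame_size` of 4 samples and the 90% `threshold` are all assumptions chosen for the example.

```python
from typing import List, Tuple


def frame_energies(samples: List[float], frame_size: int) -> List[float]:
    """Mean squared amplitude of each complete, non-overlapping frame."""
    return [
        sum(s * s for s in samples[i:i + frame_size]) / frame_size
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]


def fingerprint(samples: List[float], frame_size: int = 4) -> Tuple[int, ...]:
    """Toy fingerprint (an assumption): one bit per adjacent frame pair, 1 if loudness rises."""
    energies = frame_energies(samples, frame_size)
    return tuple(1 if later > earlier else 0
                 for earlier, later in zip(energies, energies[1:]))


def matches(upload: List[float], reference: List[float],
            frame_size: int = 4, threshold: float = 0.9) -> bool:
    """Flag the upload if enough fingerprint bits agree with a claimed reference."""
    fu = fingerprint(upload, frame_size)
    fr = fingerprint(reference, frame_size)
    n = min(len(fu), len(fr))
    if n == 0:
        return False  # too short to compare
    agreeing = sum(1 for a, b in zip(fu[:n], fr[:n]) if a == b)
    return agreeing / n >= threshold


# A hypothetical "rightsholder" registers a silent track as their work.
claimed_silence = [0.0] * 32

print(matches([0.0] * 40, claimed_silence))                       # True: someone else's silence
print(matches([1 - i / 32 for i in range(32)], claimed_silence))  # True: an unrelated fade-out
print(matches([i / 32 for i in range(32)], claimed_silence))      # False: an unrelated fade-in
```

Under this toy scheme, anyone who registers silence as "their" work causes other silent or steadily fading uploads to be flagged, because they all reduce to the same all-zero fingerprint; nothing in the matching step asks whether the claim is genuine or whether the use is fair dealing.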

Online platforms aren't in the entertainment business

The online companies most impacted by Article 13 are platforms for general-purpose communications in every realm of human endeavor, and if we try to regulate them like a cable operator or a music store, that's what they will become.

The Directive does not adequately protect fair dealing and due process

Some drafts of the Directive do say that EU nations should have "effective and expeditious complaints and redress mechanisms that are available to users" for "unjustified removals of their content. Any complaint filed under such mechanisms shall be processed without undue delay and be subject to human review. Right holders shall reasonably justify their decisions to avoid arbitrary dismissal of complaints." What's more, "Member States shall also ensure that users have access to an independent body for the resolution of disputes as well as to a court or another relevant judicial authority to assert the use of an exception or limitation to copyright rules."

On their face, these look like very good news! But again, it's hard (impossible) to see how these could work at Internet scale. One of EFF's clients had to spend ten years in court when a major record label insisted, after human review (albeit a cursory one), that the few seconds' worth of tinny background music in a video of her toddler dancing in her kitchen infringed copyright. But with Article 13's filters, there are no humans in the loop: the filters will result in millions of takedowns, and each one of these will have to receive an "expeditious" review. Once again, we're back to hiring all the lawyers now alive (or possibly all the lawyers that have ever lived and ever will live) to check the judgments of an unaccountable black box descended from a system that thinks that birdsong and silence are copyright infringements.

It's pretty clear the Directive's authors are not thinking this stuff through. For example, some proposals include privacy rules: "the cooperation shall not lead to any identification of individual users nor the processing of their personal data." That's great, but how are you supposed to prove that you created the copyrighted work you just posted without disclosing your identity? This could not be more nonsensical if it said, "All tables should weigh at least five tonnes and also be easy to lift with one hand."

The speech of ordinary Internet users matters

Eventually, arguments about Article 13 end up here: "Article 13 means filters, sure. Yeah, I guess the checks and balances won't scale. OK, I guess filters will catch a lot of legit material. But so what? Why should I have to tolerate copyright infringement just because you can't do the impossible? Why are the world's cat videos more important than my creative labour?"

One thing about this argument: at least it's honest. Article 13 pits the free speech rights of every Internet user against a speculative theory of income maximisation for creators and the entertainment companies they ally themselves with: that filters will create revenue for them.

It's a pretty speculative bet. If we really want Google and the rest to send more money to creators, we should create a Directive that fixes a higher price through collective licensing.

But let's take a moment to reflect on what "cat videos" really stand in for here. The personal conversations of 500 million Europeans and 2 billion global Internet users matter: they are the social, familial, political and educational discourse of a planet and a species. They have worth, and thankfully it's not a matter of choosing between the entertainment industry and all of that. Both can peacefully co-exist, but it's not a good look for arts groups to advocate that everyone else shut up and passively consume entertainment product as a way of maximising their profits.

10 years in Chinese prison for writing a homoerotic novel

30th November 2018

See CC article from hrw.org by Graeme Reid

In an assault on freedom of expression, a court in China sentenced a successful novelist, Ms. Liu, to 10 years in prison on October 31 for including explicit homoerotic content in her work. The charge against her was making and selling obscene material for profit. Information about the case has only recently circulated online, generating a widespread outcry on social media against censorship as well as against the disproportionate and excessive severity of her sentence.

The writer, who uses the pen name Tianyi, was arrested in 2017, after the publication of her novel Occupy. Pornography is illegal in China. The 1997 penal code forbids depicting sexual acts except for medical or artistic purposes. According to police in Anhui Province, in eastern China, the book described obscene behavior between males, including violence, abuse, and humiliation.

...See the full article from hrw.org

India ups the ante with 12,500 websites blocked to protect a local blockbuster movie

30th November 2018

See CC article from torrentfreak.com

The Madras High Court has handed down one of the most aggressive site-blocking orders granted anywhere in the world. Following an application by Lyca Productions, more than 12,500 sites will be preemptively blocked by 37 Indian ISPs to prevent 2.0, India's most expensive film ever, from being leaked following its premiere.

What we're looking at here is a preemptive blocking order of a truly huge scale against sites that have not yet made the movie available and may never do so. In the meantime, however, a valuable lesson about site-blocking is already upon us. Within hours of the blocks being handed down, a copy of 2.0 appeared online and is now available via various torrent and streaming sites labeled as a 1080p PreDVDRip. Forums reviewed by TF suggest users aren't having a problem obtaining it.

With a reported budget of US$76 million, 2.0 is the most expensive Indian film. The sci-fi flick is attracting huge interest and at one stage it was reported that Arnold Schwarzenegger had been approached to play a leading role in the flagship production.

The US film censor is not impressed by a special one-night screening of the uncut version

29th November 2018

See article from bloody-disgusting.com

The House That Jack Built is a 2018 Denmark / France / Germany / Sweden horror thriller by Lars von Trier.

Starring Matt Dillon, Bruno Ganz and Uma Thurman.

Lars von Trier's upcoming drama follows the highly intelligent Jack (Matt Dillon) over a span of 12 years and introduces the murders that define Jack's development as a serial killer. We experience the story from Jack's point of view, while he postulates that each murder is an artwork in itself. As the inevitable police intervention draws nearer, he takes greater and greater risks in his attempt to create the ultimate artwork.

The MPAA is going after distributor IFC Films over a one-day special screening of the Director's Cut of The House That Jack Built. Ahead of the general release of the cut, R-rated version of Lars von Trier's new film on December 14, the Director's Cut of the film played select theaters for one night only, and it looks like those screenings have landed IFC Films in trouble with the US film censors of the MPAA. The MPAA has rules allowing only one version of a film to be shown in cinemas at a time. Ratings can in fact be changed, but only after a certain time has elapsed, and with the previous rating being revoked. As reported by Deadline, IFC now faces potential sanctions over the screenings. The MPAA said in a statement that they have:

Communicated to the distributor, IFC Films, that the screening of an unrated version of the film in such close proximity to the release of the rated version -- without obtaining a waiver -- is in violation of the rating system's rules. The effectiveness of the MPAA ratings depends on our ability to maintain the trust and confidence of American parents. That's why the rules clearly outline the proper use of the ratings. Failure to comply with the rules can create confusion among parents and undermine the rating system -- and may result in the imposition of sanctions against the film's submitter.

A hearing in the very near future will allow IFC to plead their case, and it's possible that the MPAA could revoke the rating they had issued to the film.

The EFF is opposing the censorship of film stars' ages

29th November 2018

See article from eff.org

California is still trying to gag websites from sharing true, publicly available, newsworthy information about actors. While this effort is aimed at the admirable goal of fighting age discrimination in Hollywood, the law unconstitutionally punishes publishers of truthful, newsworthy information and denies the public important information it needs to fully understand the very problem the state is trying to address. So we have once again filed a friend-of-the-court brief opposing that effort.

The case, IMDb v. Becerra, challenges the constitutionality of California Civil Code section 1798.83.5, which requires "commercial online entertainment employment services providers" to remove an actor's date of birth or other age information from their websites upon request. The purported purpose of the law is to prevent age discrimination by the entertainment industry. The law covers any "provider" that "owns, licenses, or otherwise possesses computerized information, including, but not limited to, age and date of birth information, about individuals employed in the entertainment industry, including television, films, and video games, and that makes the information available to the public or potential employers." Under the law, IMDb.com, which meets this definition because of its IMDb Pro service, would be required to delete age information from all of its websites, not just its subscription service.

We filed a brief in the trial court in January 2017, and that court granted IMDb's motion for summary judgment, finding that the law was indeed unconstitutional. The state and the Screen Actors Guild, which intervened in the case to defend the law, appealed the district court's ruling to the U.S. Court of Appeals for the Ninth Circuit. We have now filed an amicus brief with that court. We were once again joined by the First Amendment Coalition, the Media Law Resource Center, the Wikimedia Foundation, and the Center for Democracy and Technology.

As we wrote in our brief, and as we and others urged the California legislature when it was considering the law, the law is clearly unconstitutional. The First Amendment provides very strong protection for publishing truthful information about a matter of public interest. And the rule has extra force when the truthful information is contained in official governmental records, such as a local government's vital records, which contain dates of birth.

This rule, sometimes called the Daily Mail rule after the Supreme Court opinion from which it originates, is an extremely important free speech protection. It gives publishers the confidence to publish important information even when they know that others want it suppressed. The rule also supports the First Amendment rights of the public to receive newsworthy information.

Our brief emphasizes that although IMDb may have a financial interest in challenging the law, the public too has a strong interest in this information remaining available. Indeed, if age discrimination in Hollywood is really such a compelling issue, and EFF does not doubt that it is, hiding age information from the public makes it difficult for people to participate in the debate about alleged age discrimination in Hollywood, form their own opinions, and scrutinize their government's response to it.

Chinese Disneyland looks set to be purged of its Winnie the Pooh rides

29th November 2018

See article from inquisitr.com

One of the most beloved Disney characters seems to be on the way out at Shanghai Disneyland, and it all has to do with the Chinese president getting all wound up by a mild joke. A report has come out that Winnie the Pooh and virtually all references to the bear may be taken out of the park in Shanghai. That would include removing all merchandise, having no character meet-and-greets, and reworking two rides to different themes. One of the rides is The Many Adventures of Winnie the Pooh dark ride and the other is a spinning teacup ride called Pooh's Hunny Pot Spin. According to a report from Theme Park University, this would all be at the command of Chinese President Xi Jinping, who took umbrage at the jokey cartoon comparison of Xi Jinping and Winnie the Pooh.

Fake news prevention presents huge business opportunity. By Ryan Holmes

29th November 2018

See article from business.financialpost.com

Miserable gits on the local council want to close down cafe over its name

28th November 2018

See article from independent.co.uk

The owners of a cafe in Cornwall say they face closure over their business's slightly suggestive name. Kevin and Laura Baker said they could lose everything after miserable councillors lodged objections to their roadside sandwich shop, Nice Baps. They opened the cafe five years ago in a shipping container revamped to look like a log cabin on a layby of the A39 outside Wadebridge.

But the Bakers said their livelihood was now in jeopardy after two parish councillors objected to them renewing their street trading licence. They said they were told by a licensing officer that the councillors took issue with the name and size of the cafe. Egloshayle Parish Council denied that the cafe's name was a problem and claimed that the concerns were about the business breaching its licensing agreement. The Bakers will now face a Cornwall Council licensing hearing next month. They responded to the parish council's claim:

If we had breached one of the conditions of our license, why have we not had a letter to say can you do something to change it?

But ASA's advert censors kindly averted their eyes and only noticed Kelly Brook's shoes

28th November 2018

See article from asa.org.uk

See video from YouTube

A TV and VOD ad for Skechers seen in August 2018:

- a. The TV ad for Skechers seen on 30 August 2018 featured TV presenter Kelly Brook walking along a pavement wearing a jumper and jeans. She says, "I like my clothes form fitting, but not my shoes. That is why I wear Skechers knitted footwear. So I look and feel my best. People tend to notice things like that." In the same shot a man carrying a box of oranges was distracted by Kelly Brook and crashed into his colleague, causing them both to drop the contents of the boxes they were carrying. A male cyclist passed the TV presenter and looked back at her.

- b. The VOD ad was the same as ad (a).

Issue

Three complainants questioned whether the ad was offensive because it objectified women.

ASA Assessment: Complaints not upheld

The ad featured the TV presenter, wearing a jumper with jeans and trainers, walking down a high street. The ASA considered that the outfit was not revealing and nothing about Kelly Brook's behaviour was sexualised or objectifying. We noted that the men in the ad did notice the presenter and that their reactions in doing so were exaggerated. However, we did not consider that the ad contained anything which pointed to an exploitative scenario or tone. We concluded that the ad did not objectify or degrade women and therefore was not socially irresponsible and was unlikely to cause serious or widespread offence.

Spanish comedian in court after he blows his nose on the country's flag

28th November 2018

See article from chortle.co.uk

See video from YouTube

A Spanish comedian has been hauled in front of a judge for blowing his nose on the national flag on TV. Dani Mateo could be prosecuted for the offence of public affront to the symbols of Spain, which comes with a fine, or for carrying out a hate crime, which carries a maximum sentence of four years in jail.

The complaint was brought by a trade union representing police officers. They protested over a sketch on the satirical news show El Intermedio, broadcast on the La Sexta channel last month, in which Mateo joked that he was going to read the only text that genuinely creates consensus in Spain: the patient guidelines in a packet of Frenadol. But as he read the instructions on the cold remedy, he pretended to sneeze, and blew his nose on the Spanish flag. He joked:

Christ, sorry! I didn't want to offend anyone. I didn't want to offend Spaniards, nor the king, nor the Chinese who sell these rags. Not rags, I didn't mean rags.

Google employees write open letter opposing Google supporting the Chinese internet censorship regime

28th November 2018

See open letter at medium.com

We are Google employees. Google must drop Dragonfly.

We are Google employees and we join Amnesty International in calling on Google to cancel project Dragonfly, Google's effort to create a censored search engine for the Chinese market that enables state surveillance.

We are among thousands of employees who have raised our voices for months. International human rights organizations and investigative reporters have also sounded the alarm, emphasizing serious human rights concerns and repeatedly calling on Google to cancel the project. So far, our leadership's response has been unsatisfactory.

Our opposition to Dragonfly is not about China: we object to technologies that aid the powerful in oppressing the vulnerable, wherever they may be. The Chinese government certainly isn't alone in its readiness to stifle freedom of expression, and to use surveillance to repress dissent. Dragonfly in China would establish a dangerous precedent at a volatile political moment, one that would make it harder for Google to deny other countries similar concessions.

Our company's decision comes as the Chinese government is openly expanding its surveillance powers and tools of population control. Many of these rely on advanced technologies, and combine online activity, personal records, and mass monitoring to track and profile citizens. Reports are already showing who bears the cost, including Uyghurs, women's rights advocates, and students. Providing the Chinese government with ready access to user data, as required by Chinese law, would make Google complicit in oppression and human rights abuses.

Dragonfly would also enable censorship and government-directed disinformation, and destabilize the ground truth on which popular deliberation and dissent rely. Given the Chinese government's reported suppression of dissident voices, such controls would likely be used to silence marginalized people, and favor information that promotes government interests.

Many of us accepted employment at Google with the company's values in mind, including its previous position on Chinese censorship and surveillance, and an understanding that Google was a company willing to place its values above its profits. After a year of disappointments including Project Maven, Dragonfly, and Google's support for abusers, we no longer believe this is the case. This is why we're taking a stand.

We join with Amnesty International in demanding that Google cancel Dragonfly. We also demand that leadership commit to transparency, clear communication, and real accountability. Google is too powerful not to be held accountable. We deserve to know what we're building and we deserve a say in these significant decisions.

Signed by 478 Google employees

Parliamentary group defines Islamophobia as a type of racism that targets Muslim identity

28th November 2018

See article from theconversation.com

See APPG report [pdf] from static1.squarespace.com

The All Party Parliamentary Group (APPG) on British Muslims has made history by putting forward the first working definition of Islamophobia in the UK. Its report, Islamophobia Defined, states:

Islamophobia is rooted in racism and is a type of racism that targets expressions of Muslimness or perceived Muslimness.

The culmination of almost two years of consultation and evidence gathering, the definition takes into account the views of different organisations, politicians, faith leaders, academics and communities from across the country. No doubt the term will still be used as an accusation intended to silence people from mentioning negative traits associated with Islam.

|

| |

Australian parliament passes new law with wide-ranging blocking of copyright infringing websites

|

|

|

| 28th November 2018

|

|

| See article from torrentfreak.com |

The Australian Parliament has passed controversial amendments to copyright law. There will now be a tightened site-blocking regime that will tackle mirrors and proxies more effectively, restrict the appearance of blocked sites in Google search, and introduce the possibility of blocking dual-use cyberlocker-type sites.

Section 115A of Australia's Copyright Act allows copyright holders to apply for injunctions to force ISPs to prevent subscribers from accessing pirate sites. While rightsholders say that it's been effective to a point, they have lobbied hard for improvements. The resulting Copyright Amendment (Online Infringement) Bill 2018 contained proposals to close the loopholes. After receiving endorsement from the Senate earlier this week, the legislation was today approved by Parliament.

Once the legislation comes into force, proxy and mirror sites that appear after an injunction against a pirate site has been granted can be blocked by ISPs without the parties having to return to court. Assurances have been given, however, that the court will retain some oversight. Search engines, such as Google and Bing, will also be affected. Accused of providing backdoor access to sites that have already been blocked, search providers will now have to remove or demote links to overseas-based infringing sites, along with their proxies and mirrors. The Australian Government will review the effectiveness of the new amendments in two years' time. |

| |

BFI to refuse funds to films with facially scarred villains

|

|

|

| 27th November 2018

|

|

| See article from telegraph.co.uk |

Films that have facially-scarred villains will no longer receive funding from the British Film Institute, the organisation has announced, as part of a campaign to remove the stigma around disfigurement. From Darth Vader to Scar in The Lion King, film-makers have long made a link between physical disfigurement and evil. The BFI is backing the #IAmNotYourVillain campaign launched by the group Changing Faces. Ben Roberts, the BFI's deputy CEO, said:

Film is a catalyst for change and that is why we are committing to not having negative representations depicted through scars or facial difference in the films we fund. This campaign speaks directly to the criteria in the BFI diversity standards, which call for meaningful representations on screen. We fully support Changing Faces's #IAmNotYourVillain campaign, and urge the rest of the film industry to do the same.

|

| |

And people around him say shh! You can't write books about Muslim terrorists!

|

|

|

| 26th November 2018

|

|

| Thanks to Nick

See article from theguardian.com |

A Suicide Bomber Sits in the Library, a new comic book, has been pulled from publication at the behest of the PC lynch mob. The graphic novel, written by the Newbery Medal-winning author Jack Gantos and illustrated by Sandman artist Dave McKean, is part of a series linked by 'sitting' and was due to be released in May 2019. The book is pretty much a morality tale. It follows a suicide bomber who changes his mind once he discovers the joys of reading.

A group called the Asian Author Alliance responded with a call for the book to be censored. In an open letter, 1000 signatories said the book was steeped in Islamophobia and profound ignorance. The letter continued:

The simple fact is that today, the biggest terrorist threat in the US is white supremacy. In publishing A Suicide Bomber Sits in the Library, [publisher] Abrams is wilfully fear-mongering and spreading harmful stereotypes in a failed attempt to show the power of story.

As criticism of the comic spread online, McKean, one of the UK's most acclaimed comics illustrators, responded, saying that the book was firmly on the side of literacy, empathy and non-violence. He tweeted:

The premise of the book is that a boy uses his mind and faith to decide for himself that violence is not the right course. I had just this anxiety when the script came to me. I just hoped we'd moved beyond each of us only being able to talk to and from our own little cultural bubble.

Abrams announced the cancellation of the comic, saying in a statement that it had decided to withdraw it, with the support of McKean and Gantos:

While the intention of the book was to help broaden a discussion about the power of literature to change lives for the better, we recognise the harm and offence felt by many at a time when stereotypes breed division, rather than discourse. |

| |

Notable film director leaves a fine legacy of films involving a tussle with censors

|

|

|

| 26th November 2018

|

|

| See article from en.wikipedia.org |

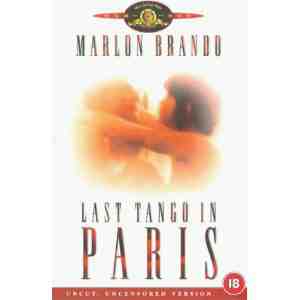

Bernardo Bertolucci was an Italian film director and screenwriter, whose films include The Conformist, Last Tango in Paris, 1900, The Last Emperor (for which he won the Academy Award for Best Director and the Academy Award for Best Adapted Screenplay), The Sheltering Sky, Little Buddha, Stealing Beauty and The Dreamers. In recognition of his work, he was presented with the inaugural Honorary Palme d'Or Award at the opening ceremony of the 2011 Cannes Film Festival. He died on the 26th November 2018. He leaves a fine legacy of clashes with film censors:

Last Tango in Paris is a 1972 France / Italy romance by Bernardo Bertolucci.

Starring Marlon Brando, Maria Schneider and Maria Michi.

Cut by the BBFC for an X rated 1973 cinema release, although a few local authorities banned the film anyway. Later passed uncut for all releases since 1978. Also cut for an R rating in the US although the NC-17 rated version is uncut. See further details at Melon Farmers Film Cuts: Last Tango in Paris

1900 is a 1976 Italy/France/West Germany drama by Bernardo Bertolucci.

With Robert De Niro, Gérard Depardieu and Dominique Sanda.

Exists in 3 versions: a cut International Theatrical Version, the full-length Italian Version and the Italian Version without a real sex masturbation scene. See further details at Melon Farmers Film Cuts: 1900

The Dreamers is a 2003 UK / France / Italy romance by Bernardo Bertolucci.

Starring Michael Pitt, Louis Garrel and Eva Green.

Uncut in the UK for 18 rated cinema and video release. The R rated version was cut in the US for an MPAA R rating but the Unrated version is uncut. See further details at Melon Farmers Film Cuts: The Dreamers

|

| |

Notable film director leaves a fine legacy of films involving a tussle with censors

|

|

|

| 26th November 2018

|

|

| See article from en.wikipedia.org

See Nicolas Roeg's painterly, sinister films had a profound impact on cinema from spiked-online.com

|

Nicolas Roeg CBE BSC was an English film director and cinematographer, best known for directing Performance (1970), Walkabout (1971), Don't Look Now (1973), The Man Who Fell to Earth (1976), Bad Timing (1980), and The Witches (1990).

Making his directorial debut 23 years after his entry into the film business, Roeg quickly became known for an idiosyncratic visual and narrative style, characterized by the use of disjointed and disorienting editing. For this reason, he was considered a highly influential filmmaker, with directors such as Steven Soderbergh, Christopher Nolan, and Danny Boyle citing him as an influence. In 1999, the British Film Institute acknowledged Roeg's importance in the British film industry by naming Don't Look Now and Performance the 8th and 48th greatest British films of all time respectively in its Top 100 British films poll. He died on the 23rd November 2018. He leaves a fine legacy of clashes with film censors:

Performance is a 1970 UK crime drama by Donald Cammell & Nicolas Roeg.

With James Fox, Mick Jagger and Anita Pallenberg.

History of censorship at the hands of the BBFC and its own distributor, Warner. BBFC cuts were later waived but the extensive footage removed by Warners is still missing. See further details at Melon Farmers Film Cuts: Performance

Walkabout is a 1971 UK adventure drama by Nicolas Roeg.

With Jenny Agutter, David Gulpilil and Luc Roeg.

Uncut by the BBFC albeit after an appeal against proposed cuts for the 1971 cinema release. There exists a shortened version in the US, although the Unrated version is uncut. See further details at Melon Farmers Film Cuts: Walkabout

Bad Timing is a 1980 UK mystery thriller by Nicolas Roeg.

With Art Garfunkel, Theresa Russell and Harvey Keitel.

Re-edited to separate a juxtaposed image of a child with an image of lovemaking. This cut has persisted into all subsequent releases.

The Witches is a 1990 UK / USA family horror fantasy by Nicolas Roeg.

Starring Anjelica Huston, Mai Zetterling and Jasen Fisher.

Cut by the BBFC for a PG rating. There is an uncut European version.

Uncut in the US but with an alternative happy ending. See further details at Melon Farmers Film Cuts: The Witches

|

| |

Russia considers increasing fines as Google refuses to comply with Russia's list of banned websites

|

|

|

| 26th November 2018

|

|

| See article from theverge.com |

Russia's state censors have formally accused Google of breaking the law by not removing links to websites that are banned in the country. Roskomnadzor, the state communications censor, said in a statement that the company had not connected to a database of banned sources in the country, leaving it out of compliance. The potential penalty that Google could face is currently 700,000 roubles, or about $10,000. But Reuters reports that the Russian government has been considering more drastic actions, including fining companies up to 1 percent of annual revenue for failing to comply with similar laws. |

| |

|

|

|

| 26th November 2018

|

|

|

Beyond the massive technical challenge, filters are a lazy alternative to effective sex education. By Lux Alptraum

See article from theverge.com |

| |

Twitter outlaws misgendering or deadnaming of trans people

|

|

|

| 25th November 2018

|

|

| See article from thegayuk.com

See censorship rules from help.twitter.com

See response from The Britisher from youtu.be |

Deadnaming and misgendering could now get you a suspension from Twitter as it looks to shore up its safeguarding policy for people in the protected transgender category. Twitter's recently updated censorship policy now reads:

Repeated and/or non-consensual slurs, epithets, racist and sexist tropes, or other content that degrades someone

We prohibit targeting individuals with repeated slurs, tropes or other content that intends to dehumanize, degrade or reinforce negative or harmful stereotypes about a protected category. This includes targeted misgendering or deadnaming of transgender individuals.

According to the Oxford English Dictionary, misgendering means:

Refer to (someone, especially a transgender person) using a word, especially a pronoun or form of address, that does not correctly reflect the gender with which they identify.

According to thegayuk.com:

Deadnaming is when a person refers to someone by a previous name, it could be done with malice or by accident. It mostly affects transgender people who have changed their name during their transition.

|

| |

Ofcom investigates a complaint about a Chinese propaganda channel broadcasting a confession said to be extracted under duress.

|

|

|

| 25th November 2018

|

|

| See article from japantimes.co.jp

|

A British corruption investigator has asked the UK's TV censor Ofcom to revoke Chinese state media's broadcast license for helping to stage his allegedly forced confession and subsequent jailing in China. Peter Humphrey was arrested for his work investigating corruption in the pharmaceutical sector. He was sentenced to over two years in prison by a Shanghai court in 2014. He served his time and was then deported. He has now submitted a complaint to Ofcom about China Central Television (CCTV) for its alleged role in the episode. He said that CCTV journalists cooperated with police to extract, record, post-produce and then broadcast his confession worldwide through its international propaganda channels.

Humphrey accuses Chinese authorities of drugging him and locking him in a chair inside a small metal cage to conduct the confession. His complaint added:

China Central Television (CCTV) journalists then aimed their cameras at me and recorded me reading out the answers already prepared for me by the police.

A spokesman for Ofcom confirmed it had received a complaint which it is assessing as a priority. Ofcom has previously taken action against the broadcast of 'confessions' extracted under duress. |

|

|